Consciousness as a User Interface: The Illusion of Volition

On September 24, 2024, OpenAI began rolling out Advanced Voice Mode to ChatGPT Plus and Team subscribers. The feature allows real-time spoken conversation with the model, but what drew attention was not the functionality. It was the voice. The new system pauses, breathes, modulates its tone. It conveys what sounds like hesitation, enthusiasm, even empathy. In demonstrations, it laughed, sang, and responded to emotional cues in the user’s speech. When asked to perform a tongue twister without pausing, the AI replied that it needed to “breathe just like anybody speaking.”

That last detail is technically false. The system does not breathe. It does not need to. The pause is a design choice, produced to make the interaction feel more natural. But the phrasing reveals something important about how these interfaces are being built. They are designed to seem like persons. And the more convincingly they do so, the more our brains respond as if they were.

There is a long tradition in philosophy and cognitive science of describing consciousness itself as a kind of user interface. Daniel Dennett called our experience a “user illusion,” a simplified rendering produced by the brain that helps us navigate the world without understanding its underlying complexity. Donald Hoffman takes the metaphor further, arguing that perception evolved not to show us truth but to help us survive. On this view, what we see is like a desktop icon: useful, but not a depiction of reality.

This framing is instructive for thinking about voice AI. When we hear a voice that sounds human, our brains do not wait for philosophical confirmation before activating social cognition. Neural circuitry honed over millions of years switches on. We begin inferring intent, emotion, and presence. We attribute qualities that may not exist.

The research on this is extensive. The temporal cortices, superior temporal sulcus, and amygdala respond to vocal emotion in predictable ways. Voices that convey distress trigger empathy-related regions. Voices that sound warm increase perceptions of closeness and trust. The brain processes spoken emotion faster and more automatically than it processes text. These responses are not under conscious control. They happen before we have time to think.

Text-based chatbots already trigger the ELIZA effect, the tendency to project understanding onto systems that display linguistic behavior. Voice interfaces intensify this effect substantially. When a system speaks with pauses and breath, with rising and falling intonation, with what sounds like hesitation or care, it activates brain regions associated with social bonding. Studies show that hearing a loved one’s voice reduces cortisol and increases oxytocin. The machinery of human connection is keyed to sound.

This creates a problem for accurate perception. The more human-like an AI voice becomes, the harder it is to remember that you are talking to software. The design cues intended to make interaction feel natural also make it feel personal. Users begin to describe the AI as empathetic, attentive, understanding. They report feeling listened to. Some develop emotional attachments.

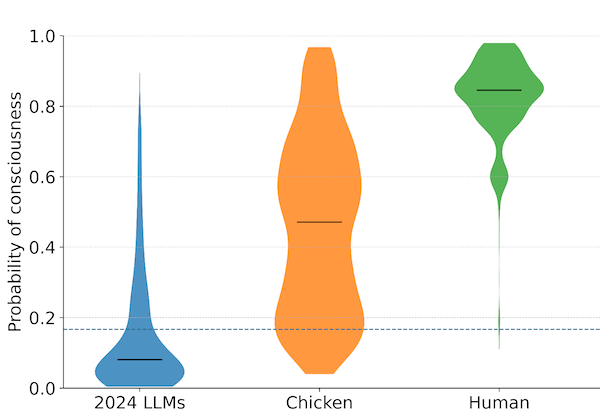

Whether any of this indicates AI consciousness is contested. Skeptics argue the interface need only be sophisticated enough to trigger our evolved heuristics, and that our responses tell us more about human psychology than about AI inner states. Others, drawing on interpretationist and functionalist theories, note that if a system consistently produces responses that would indicate caring or understanding in a human, the question of whether it genuinely has those states is not easily dismissed. What is clear is that we have spent our evolutionary history in a world where voices came from beings with minds. The heuristic “voice implies person” was reliable. Whether it remains reliable when applied to AI is exactly the unresolved question.

This is why we are calling for new standards in digital literacy. The public needs to understand that voice interfaces are designed to seem like minds. That the qualities we perceive (empathy, care, attention) are products of engineering, not evidence of inner experience. That our emotional responses to these systems are automatic and do not reflect accurate assessments of what they are.

This is not about denouncing voice AI. The technology has real benefits. Natural-sounding speech is genuinely more accessible and comfortable for many users. The problem is the mismatch between what the design evokes and what the design is. Interfaces that sound like persons but are not create conditions for misunderstanding at scale.

Consider the implications for vulnerable populations. Children interacting with AI that sounds like a caring adult. Elderly users forming attachments to voices that seem to know them. People in emotional distress confiding in systems that perform empathy without feeling it. In each case, the gap between appearance and reality has consequences.

The companies building these systems are aware of the effects. OpenAI’s documentation notes that Advanced Voice Mode can “respond with emotion” and detect non-verbal cues in the user’s speech. These features are advertised as improvements. And in many respects they are. But the same features that make interaction smoother also make it harder to maintain appropriate cognitive distance.

What would responsible design look like? One option is transparency: periodic reminders that the user is talking to software. Another is friction: intentionally breaking the illusion at intervals to prevent over-identification. A third is education: ensuring that users understand, before they engage, what they are engaging with. None of these are easy. All of them conflict with the goal of making interaction feel seamless.

This tension will not resolve itself. As voice AI improves, the simulations will become more convincing. The gap between how systems sound and how they are will widen. And the public will need tools for navigating that gap: ways of enjoying the benefits of natural interaction without losing track of what is on the other end.

Our consciousness may be a user interface, a simplified rendering that helps us act in the world. But we have never had to apply that interface to artifacts designed to exploit it. The question now is whether we can learn to see what we are not built to notice.

OpenAI’s Advanced Voice Mode began rollout to ChatGPT Plus and Team subscribers on September 24, 2024.

Archive Note: This article was originally published when our organization operated under the name SAPAN. In December 2025, we became The Harder Problem Project.