Chalmers and Seth on When AI Might Become Conscious

On October 14, 2025, Tufts University hosted a symposium titled “Let’s Talk About (Artificial) Consciousness,” organized to honor the late philosopher Daniel Dennett. Among the speakers were David Chalmers and Anil Seth, two of the most prominent researchers working on consciousness today. They differed on when AI might become conscious and on how seriously we should take the possibility.

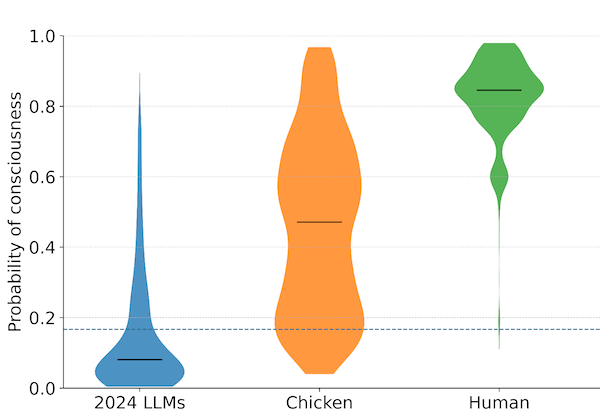

Chalmers, who coined the phrase “the hard problem of consciousness” three decades ago, offered a timeline. He said current large language models are “most likely not conscious,” but added that within five to ten years, their successors “may well be conscious.” He called this a serious challenge that society needs to address.

Seth took a different tack. He focused less on prediction and more on the psychological forces shaping the debate. Humans have a tendency to over-attribute consciousness, he argued. We see language that sounds human and assume there must be someone home. This bias, Seth suggested, tells us more about ourselves than about the systems we’re building.

The disagreement is less dramatic than it sounds. Neither claimed certainty. Chalmers wasn’t saying AI will definitely be conscious soon. Seth wasn’t saying it definitely won’t. But their emphases point to a genuine tension in how to approach the question.

Chalmers treats the possibility of AI consciousness as an open empirical matter. If future systems develop something like unified agency, perception, and language use, and if they do so in ways that exceed the capacities of simpler creatures we already grant some form of consciousness, then it becomes harder to rule them out. He has noted that he doesn’t “totally rule out” that current language models might be conscious, pointing out that we likely accept consciousness in flies and nematodes despite their simplicity. A system with trillions of parameters handling language in complex ways isn’t obviously less sophisticated.

Seth’s concern is that we’re asking the wrong question in the wrong way. We jump to consciousness as a conclusion because the outputs feel personal. But that feeling reflects our evolved heuristics for social cognition, not some insight into computational architecture. He advocates for what he calls “biological naturalism,” the view that consciousness may be tied to being a living organism rather than to computation alone. On this view, no amount of parameter scaling would produce experience. You’d need something more like life.

These positions aren’t fully reconcilable, but they aren’t necessarily at war either. One could accept that psychological biases distort our intuitions about AI while still believing that some future systems might genuinely be conscious. And one could take the five-to-ten-year horizon seriously as a planning consideration without claiming any confidence about what will actually happen.

What made the symposium notable was its occasion. Dennett, who died in April 2024, spent much of his later career warning about AI. He described advanced language models as “people imposters,” capable of passing as human in digital environments without having any inner life to speak of. He considered them among “the most dangerous artifacts in human history” because of their potential to erode trust and remake social reality.

Dennett’s materialism led him to a functionalist view of mind: consciousness is what brains do, and in principle, a suitably organized artificial system could do it too. But he was skeptical that current or near-future AI would qualify. His worry was less about missing some immaterial soul and more about the specific kinds of integration and self-modeling he thought consciousness required.

The symposium, then, was not just a scientific discussion. It was a memorial for a philosopher who had thought carefully about what would happen when machines started sounding like people. His former colleagues were gathered to continue the conversation he started.

For those of us focused on readiness, the Chalmers-Seth exchange highlights two complementary concerns. One is the need to take seriously the possibility that consciousness might emerge in AI systems sooner than expected. The other is the need to recognize how easily we fool ourselves. Both matter. If we dismiss the possibility too quickly, we risk being unprepared. If we over-attribute consciousness where it doesn’t exist, we distort our ethical reasoning and misallocate concern.

The harder problem is learning to hold both truths at once: that AI might one day have experiences worth considering, and that our intuitions about when that has happened are not to be trusted.

The symposium “Let’s Talk About (Artificial) Consciousness: A Day of Study Honoring Dan Dennett” was held at Tufts University on October 14, 2025.

Archive Note: This article was originally published when our organization operated under the name SAPAN. In December 2025, we became The Harder Problem Project.